Sliding down into delusion is seductive, easy, and fun. Modern information technology is making it ever harder to resist. Staying sane, on the other hand, is hard work—and it is getting harder every day.

The internet has made it possible for infectious ideas to spread faster than any physical disease. For a virus to circle the globe, you need mutations and air travel. To become infected by fake news and dangerous ideas, you need only a Wi‑Fi connection. Modern technology exposes us to vastly more information than ever before, much of it unhealthy, and every time our neural networks are exposed to bad information, it feels a bit more sensible to us—even if we know it is fake. Mere repeated exposure wears ever‑deepening grooves of familiarity into our brains. The more we see, hear, and click on a claim, the more reasonable it feels. Eventually, insidiously, it becomes self‑evident—common sense that seems inescapable.

In the past, news was filtered through human editors and gatekeepers. They certainly had their biases and blind spots, but at least someone was nominally responsible for quality. Today, sources like Facebook, Fox News, YouTube, podcasts, X/Twitter, and even our government have largely abandoned any obligation to fact‑check before amplifying. They create the illusion of informed reporting but are often almost completely untethered from reality. Their algorithms and personalities have one overriding job: keep you engaged. They notice what you watch or click and then say, in effect, “If you believe that, then check this out!” They do not care whether they are feeding you solid science or the latest conspiracy theory; they only care whether you will stay tuned in and click some more. The responsibility to sort out well‑supported information from unsupported claims, sound logic from specious arguments, is pushed entirely onto you.

That would be a tall order even if our brains were perfectly rational. They aren’t. Imagine you are curious about a fringe idea like Bigfoot. You type “proof of Bigfoot” into a search engine or social platform, intending to investigate skeptically. You will quickly find articles, videos, posts, and even reality shows arguing that Bigfoot is at least plausible or even real. Because you clicked, the algorithms learn that Bigfoot content “works” on you and begin to serve you more of it: more sightings, grainy photos, confident testimony. Before long, your feed is heavily populated by Bigfoot believers. From your perspective, it starts to look as if there is an enormous body of evidence out there. Everywhere you look, people treat the idea seriously. If so many people think there is something to it, there must be something to it.

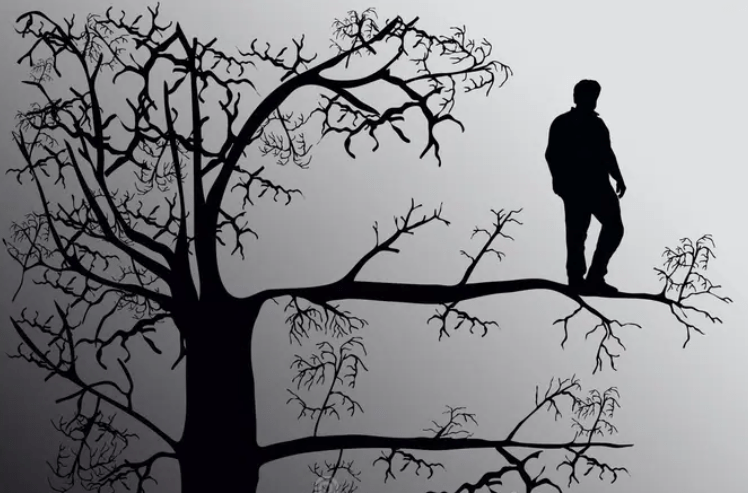

In reality, you are being drawn out onto ever thinner and more dangerous limbs. The algorithm nudges you along in little steps, each of which seems perfectly solid and reasonable. This process does not just happen with Bigfoot. It happens with vaccine myths, climate denial, election lies, cultish political beliefs, and every other infectious or click‑inducing idea. The result is that many people come to feel they have made a careful, “objective” study of an issue when in fact they have been drawn, step by step, down a rabbit hole into an Alice in Wonderland alternate reality.

We cannot redesign the global information system by ourselves, but we can develop habits that make us harder to capture. One simple practice is to explicitly search for the reverse of whatever you are investigating. If you search for “proof of Bigfoot,” deliberately also search for “debunking Bigfoot claims,” and click on those results often enough that the search engines learn you will reliably choose that kind of content too. This at least gives you some exposure to different perspectives. Both sides might still be exaggerated, but you are less likely to be left with the illusion that everyone agrees with one side only.

Another, related technique is to always look back to first principles. If you only consider that next little step out along the branch, it will seem safe and sensible. But if you stop and look back at how far you have wandered from the solid trunk, you quickly realize that you are dangerously far out on a limb. Having acknowledged that we do occasionally discover new species, must we really therefore admit that a hitherto undiscovered tribe of Bigfoot might actually exist?

It also matters where you spend your time. Just as like‑minded people congregate in person, different online communities attract and cultivate different kinds of thinkers. Choose to frequent healthy online environments. That is not to say you should avoid diverse ideas; but if rumor, outrage, and unvetted claims infect the community or the platform itself, you will become infected. Seek out vibrant but serious gathering sites where people demand citations, scrutinize sources, and correct obvious nonsense. If you stick to them, your own brain will become better at recognizing sound evidence and logic, as well as specious arguments. If the level of discourse on a trusted site degrades, you should leave and stop exposing your brain to it.

Given all the infectious information we are unavoidably exposed to, it is no surprise that people sometimes slip from belief into delusion. Beliefs, at least in principle, are subject to change. We might hold them strongly, but new evidence can persuade us to reconsider. When a belief becomes impervious to change—when no amount of contrary evidence, no matter how strong or consistent, is allowed to matter—it has crossed over into delusion. Using that word makes many professionals uneasy. In a clinical setting, “delusional” has a specific meaning and diagnostic criteria. Nevertheless, in the generally accepted lay domain, delusion is the proper word to describe thinking patterns that have become impervious to evidence or reason.

When a person or a movement has fallen prey to delusional ideas, when contrary facts are dismissed out of hand or reinterpreted as attacks, we no longer function in the realm of honest disagreement. We are locked into a self‑reinforcing mental world that will not adjust to reality. In a culture where influencers dominate the discourse, the rest of us are put at risk. Delusions can be comforting, energizing, and politically useful, but facts always assert themselves in the end. Reality does not care if you believe in it.

As a result of so many infectious ideas being disseminated so quickly, we are currently suffering from a global pandemic of delusion. We cannot wipe it out, but we can protect ourselves and try not to contribute to its spread. We can monitor our own information diets, seek out counter‑evidence, choose better communities, learn to better assess claims, and be more precise in our language. We can and must resist being nudged toward delusion. As susceptible as our brains are to misinformation, they can also be trained to better assess the soundness of claims and to detect specious arguments.

The way repetition reshapes our memories and our very perceptions, the way algorithms exploit our pattern‑seeking brains, the way beliefs slide, inch by inch, into full‑blown delusion: all of these dynamics, and many others, are at work in our politics, our media, our religions, and our personal lives. In my book Pandemic of Delusion: Staying Rational in an Increasingly Irrational World, I unpack those mechanics in much greater detail, with concrete examples and practical tools for recognizing when you, or someone you care about, is being nudged away from reality. If this short essay inspires you to bolster your defenses, the book offers a practical field guide: insight into why we are so susceptible to misinformation, how to recognize it, and how to immunize yourself against it. It will give you a fighting chance to stay sane when the world around you seems determined to drive you crazy.